If you have any kind of presence on the internet, you’ve probably heard about search engine optimization, frequently referred to as “SEO.” It’s an umbrella term for a variety of practices, strategies, and techniques that can help a website appear higher in search results.

Whenever someone says something like “this website’s SEO could be better,” they mean that the website in question could be doing things differently, whether in the kind of content it has, the code behind its pages, or something else.

At the end of the day, having good SEO means that, when people search for words or strings of words related to topics on your website, your pages will be visible to them.

What is a Search Engine?

Whether you realize it or not, you probably already have plenty of experience with search engines. Using something like Google or Bing to “search” for the answer to a question is a prime example of utilizing a search engine.

On the world wide web, search engines are websites that gather information about other websites and present that information to users in very intuitive ways. Search engines aspire to take user queries, such as “best salmon recipes,” and give users a list of results that best match what they were looking for. The page that displays these results is called a “search engine results page,” or “SERP,” and depending on the search, SERPs can take many different forms.

Today, some of the most well-known search engines are Google, YouTube, and Bing.

Many other search engines exist, all employing slightly different techniques to do the best job possible of matching users up with the results that they want. However, Google (as a company) dominates the modern world of search engines, since it owns the two most popular search engines among English-speaking internet users: Google and YouTube.

How Search Engines Like Google Work

To a human, the work of search engines can sometimes seem simple.

After all, if you are an avid home cook and a friend asks you about your favorite salmon recipes, you can probably tell them exactly what they want to know with very little effort. For our brains, making these connections between topics and finding answers for certain queries is a relatively easy task.

However, it’s not so simple for search engines, which sift through far more information per second than the average person can comprehend, just to deliver a response to a simple question.

In order to provide the best results possible for a given user query, today’s search engine companies are constantly evaluating and reworking their algorithms, the inner workings of a search engine. These algorithms sift through hundreds of millions of web pages, looking for specific signals, and ultimately show users the pages that display the most and the best signals.
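
As a rough mental model only, this can be pictured as a weighted sum over signals. The sketch below is a toy, not how any real search engine works: the signal names, weights, and URLs are all invented for illustration, since the actual signals and their relative importance are not public.

```python
# Hypothetical signal names and weights, chosen only for illustration.
SIGNAL_WEIGHTS = {
    "keyword_in_heading": 3.0,
    "quality_backlinks": 2.0,
    "clean_html": 1.0,
}


def score_page(signals: dict) -> float:
    """Weighted sum of the signals a page displays (missing signals count as 0)."""
    return sum(
        weight * signals.get(name, 0.0)
        for name, weight in SIGNAL_WEIGHTS.items()
    )


def rank_pages(pages: dict) -> list:
    """Return page URLs ordered from highest score to lowest."""
    return sorted(pages, key=lambda url: score_page(pages[url]), reverse=True)


# Made-up pages with made-up signal values between 0 and 1.
pages = {
    "example.com/salmon-recipes": {
        "keyword_in_heading": 1, "quality_backlinks": 0.8, "clean_html": 1,
    },
    "example.com/fish-facts": {
        "keyword_in_heading": 0, "quality_backlinks": 0.9, "clean_html": 1,
    },
    "example.com/spam": {
        "keyword_in_heading": 1, "quality_backlinks": 0.0, "clean_html": 0,
    },
}

ranking = rank_pages(pages)
print(ranking)
```

With these invented numbers, the salmon recipe page ranks first because it shows strong values for every signal, while the other two pages each miss at least one.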

Only the people who work on these search engines know what these signals are, and how important each one is compared to the others. In fact, with the introduction of machine learning and real-time updates, even these search engineers might not know exactly how the algorithms operate.

However, we do have a pretty clear idea about some of the most important signals and we’ll have a look at those in a moment.

Crawlers

In order to do their jobs well, search engine algorithms need to examine almost every single page on the web. They can learn about all these pages by using crawlers.

Crawlers are computer programs that browse the web, downloading copies of web pages for storage on a search engine’s servers.

However, some pages can be huge, so in order to save space and help things move along more quickly, crawlers will only download a website’s underlying code — the HTML. This is one reason why it’s so important to have clean code that is optimized for search engines.

For all their tricks, however, crawlers won’t know about new pages unless they are led to them. You can help lead crawlers to your new web pages through internal links in your existing content or through external links from other domains. Crawlers will find these links and follow them, discovering new content and downloading updates to your older content.
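
To make the link-following idea concrete, here is a minimal sketch of a crawler written with Python’s standard library. It is an illustration under simplifying assumptions, not a description of any real search engine’s crawler: the “web” is a small in-memory dictionary (`FAKE_WEB`, a made-up name) instead of real HTTP requests, and the URLs are invented.

```python
from collections import deque
from html.parser import HTMLParser


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page's HTML."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


# A tiny stand-in for the web: URL -> HTML. A real crawler would fetch pages over HTTP.
FAKE_WEB = {
    "/home": '<h1>Home</h1><a href="/recipes">Recipes</a>',
    "/recipes": '<a href="/recipes/salmon">Salmon</a><a href="/home">Home</a>',
    "/recipes/salmon": "<h1>Best salmon recipes</h1>",
    "/orphan": "<h1>No page links here</h1>",
}


def crawl(start_url):
    """Breadth-first crawl: store each page's HTML, then follow its links."""
    stored = {}
    queue = deque([start_url])
    while queue:
        url = queue.popleft()
        if url in stored or url not in FAKE_WEB:
            continue  # already downloaded, or the page does not exist
        html = FAKE_WEB[url]
        stored[url] = html
        parser = LinkExtractor()
        parser.feed(html)
        queue.extend(parser.links)
    return stored


discovered = crawl("/home")
print(sorted(discovered))
```

Starting from `/home`, the crawl reaches `/recipes` and `/recipes/salmon`, but never finds `/orphan`, because no page links to it.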

Search Engine Algorithm Updates

Search engines have a hard job — sifting through millions of pages on the internet and trying to present users with exactly the right pages that fit their interests and answer their questions.

Search engine companies know that they aren’t able to do this perfectly just yet, so they often release updates to their algorithms — fixing bugs, adding new features, and trying to give users a better experience.

Many search engine updates are targeted at black hat SEO practices, which exploit shortcomings in the search engine’s algorithm to place certain websites ahead in search rankings, even when they don’t meaningfully answer questions or adequately serve readers.

These updates can shake things up a lot. When Google, the most widely used search engine in the English-speaking world, updates its algorithm, websites may see either huge boosts or massive dips in traffic, depending on how well they are optimized for the new algorithm.

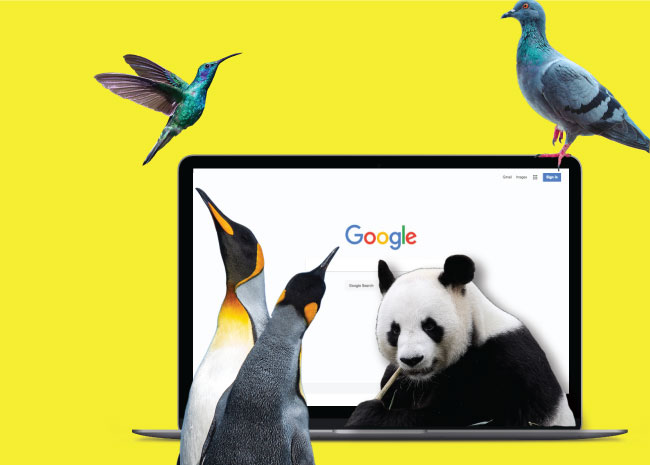

Although Google releases small updates all the time, some large updates have been important enough to earn names. Here are some of the most well-known updates in Google history:

- Panda (2011): The Panda update was one of the first major updates in modern SEO history. Its purpose was to cut down on the effects of spam and so-called thin content in Google’s algorithm. This trend continues today, as Google strives to promote high-quality content in its rankings.

- Penguin (2012): Before the Penguin update, websites were sometimes able to use spammy backlinks to rank low-value pages in search. Penguin cracked down on low-quality links, punishing sites with manipulative practices and boosting the importance of high-quality, relevant links.

- Hummingbird (2013): Hummingbird helped Google better understand user queries with an increased focus on natural language and context. This update was significant as it made Google much more of a conversational search engine.

- Pigeon (2014): The Pigeon update was a massive overhaul to Google’s local SEO product. It helped to connect users with the most relevant local results for their queries.

- RankBrain (2015): RankBrain is an AI that tries to understand exactly what searchers are looking for when they enter specific queries. This helps Google to more clearly understand questions and queries phrased in everyday language.

- Fred (2017): The Fred update was another blow to low quality content on the web. It lowered the ranking authority of pages with a strong commercial element, such as advertorial content or content that contains a lot of affiliate links.

- Medic (2018): The Medic update earned its name because of how strongly it affected the healthcare industry. However, it also affected many other industries where trust is a big factor. This update helped Google to evaluate the trustworthiness and authority of a given domain on a specific topic, promoting the most authoritative and trustworthy websites to the top of search engine rankings.

Search Engine Optimization Basics

Search engines do their best to think like humans. They want to understand user queries clearly and spit out results that best answer those queries. However, even with all of the updates to search engine algorithms over the years, search engines are still a long way from achieving this goal.

SEO is our way of reaching out to search engines on their level to help them better understand our websites.

Good SEO involves holding a search engine’s hand so it can easily figure out what your website is about and what kinds of questions your pages can answer. There are many ways you can optimize your site to help search engines understand your pages, but the two biggest things you can do are to lay out clear keyword and topic themes and to acquire high-quality backlinks to your domain.

Keywords

Search engines can’t understand sentences and questions in the same way that humans can.

If a friend asks you to recommend music that sounds like Mozart, you could give them an answer that never included the words “Mozart” or “classical music.” This is because you both understand the context of the question and the answer, and because you are personally using a sophisticated set of cognitive tools to determine what other kinds of music are most similar to Mozart.

Search engines aren’t as smart as you. They aren’t as good at understanding that Beethoven is a similar composer to Mozart because of their shared musical mediums, placement in history, and similar musical techniques (although updates like Hummingbird and RankBrain have made them better).

So if you have a page of composers that are similar to Mozart and you want to rank for queries about music like Mozart’s, you need to hold the search engine’s hand here.

You can hold its hand by including keywords on your pages. In our hypothetical situation, let’s imagine that we want our page to rank for the search “composers like Mozart.” We could include headers like “Beethoven,” “Haydn,” or “Schubert,” but these don’t tell the search engine that we have composers here that are specifically like Mozart. Instead, we need to dumb it down a bit for the search engine and include a header that states very clearly that this page is about “composers like Mozart.” An exact match in one of your headers is often a good idea, as long as it reads well to the user.
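
One way to check this on your own pages is to scan the headers for the target phrase. The sketch below is a simple illustration using Python’s standard library; the helper name `headings_matching` and the two sample pages are made up for this example, and a plain case-insensitive substring match is an assumption about what counts as an “exact match,” not a rule taken from any search engine.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects the text of every <h1> to <h6> element on a page."""

    HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []
        self._in_heading = False

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADING_TAGS:
            self._in_heading = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag in self.HEADING_TAGS:
            self._in_heading = False

    def handle_data(self, data):
        if self._in_heading:
            self.headings[-1] += data


def headings_matching(html, keyword):
    """Return the headings that contain the keyword phrase, ignoring case."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    needle = keyword.lower()
    return [h.strip() for h in parser.headings if needle in h.lower()]


vague_page = "<h1>Great Composers</h1><h2>Beethoven</h2><h2>Haydn</h2>"
clear_page = "<h1>7 Composers Like Mozart</h1><h2>Beethoven</h2><h2>Haydn</h2>"

print(headings_matching(vague_page, "composers like Mozart"))
print(headings_matching(clear_page, "composers like Mozart"))
```

The first page lists the right composers but never states the theme, so no header matches. The second page states it outright in its main header.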

This is an easy way to reach out to search engines if you already know which keywords you want to rank for. If you don’t know this, however, you’ll need to perform keyword research and identify which keywords you have opportunities to rank for.

Links and Link Building

Search engines know they aren’t capable of judging a website’s quality all on their own. In order to figure out which websites are authoritative, they crowd-source the rating process.

Search engines do this by counting external links — sometimes called backlinks. These are links from another domain to yours, and each link is sort of like a vote. Not all votes are created equal, however, and links from high authority domains carry more weight.

If your website is receiving a lot of links from other reliable domains, search engines will likely view it favorably. By collecting these “votes” for your website, you can make it easy for a search engine to understand that you’re presenting high quality, authoritative answers to user queries.
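
The idea that votes from well-linked domains count for more can be sketched with a simplified PageRank-style calculation. This is a teaching toy, not how any search engine scores authority today: the domain names, the `damping` value of 0.85, and the iteration count are assumptions made for the example.

```python
def link_scores(links, damping=0.85, iterations=50):
    """Simplified PageRank-style scores.

    links maps each domain to the list of domains it links out to.
    """
    domains = set(links) | {t for targets in links.values() for t in targets}
    n = len(domains)
    scores = {d: 1.0 / n for d in domains}

    for _ in range(iterations):
        # Every domain starts each round with a small baseline score.
        new_scores = {d: (1.0 - damping) / n for d in domains}
        for source in domains:
            targets = links.get(source, [])
            if targets:
                # A domain splits its "vote" evenly among the domains it links to.
                share = damping * scores[source] / len(targets)
                for target in targets:
                    new_scores[target] += share
            else:
                # A domain with no outgoing links spreads its score evenly.
                share = damping * scores[source] / n
                for d in domains:
                    new_scores[d] += share
        scores = new_scores
    return scores


# Both yoursite.example and rival.example have exactly one backlink,
# but yoursite's comes from a domain that itself has two backlinks.
links = {
    "blog-a.example": ["news.example"],
    "blog-b.example": ["news.example"],
    "news.example": ["yoursite.example"],
    "tiny.example": ["rival.example"],
    "yoursite.example": [],
    "rival.example": [],
}

scores = link_scores(links)
for domain, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{domain}: {score:.3f}")
```

In this made-up graph, `yoursite.example` ends up with a higher score than `rival.example` even though each has a single backlink, because its one link comes from a domain that other sites also link to.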

External links are like votes, but they’re not the only kind of link.

Remember crawlers — those bots that crawl around the web cataloging pages? They like to follow links, so including internal links to pages on your website is also important. These internal links can lead crawlers to new pages, but they can also help to establish context for some of your existing pages and help search engines understand which pages on your website are the most important.

Since links are so important, link building — the practice of actively working to acquire links for your content — is critical for many websites to improve their search engine rankings.

However, link building can take a significant amount of time, and low-quality links can sometimes hurt you more than they help. That’s why it’s important to seek out link building experts who can help you acquire high-quality links that will work to improve your rankings.

Why SEO is Important

If you do any sort of business over the web, whether you’re selling products or services online, generating leads, or bringing people into a brick-and-mortar location, then you need SEO.

One study found that 51% of a website’s traffic can come from organic search. That’s more than social media, advertising, direct traffic (bookmarks or manually entering a URL into a browser), or referrals (from emails, links on other websites, etc.). Good SEO can help you perform well in organic search, appearing higher on the lists of results that users see.

On the flip side, bad SEO can hurt your performance in organic search. In the best case scenario, bad SEO just makes it hard for search engines to understand what your website should rank for and how much of an authority you are on the subject. In the worst case, black hat SEO practices — whether you’re doing them knowingly or not — can actually cause search engines to give you a penalty and rank you lower for trying to manipulate their algorithm.

How Do You Create An SEO-Friendly Website?

Creating an SEO-friendly website is a balancing act between many different elements. For non-experts, it’s often ideal to look at SEO from a bird’s eye view, rather than getting deep into each individual element.

From this bird’s eye view, we should remember the core principle behind a search engine’s algorithm: to think like a human and give people the results that best answer their queries.

When you practice SEO, try to create a website that a human would want to read. Ask yourself: if a person came to my website with a question about my subject, would one of my pages answer it to their satisfaction?

At the same time, ask yourself if your website can be understood by a rudimentary intellect. For example, would an elementary school student be able to answer questions about what your website is about and would they be able to connect certain pages on your website with certain questions?

Address Real Questions

The first step to creating a website with content that reads well to both humans and crawlers is to find out what people really want to know.

Conduct keyword research and learn what questions people are actually asking about your subject matter. Then, create content that answers those questions in depth and mirrors the essential language of the queries that searchers are using.

Be Useful to Humans, and Accessible to Bots

Your content should answer real human questions in ways that are useful to humans, but that’s not enough for ranking well on search engines.

Good SEO should maintain everything about your content that makes it interesting to people, while still giving search engine algorithms the tools they need to understand your content.

Ultimately, writing high-quality content that speaks to both humans and bots is a part of any good SEO and content marketing strategy.