Hello and welcome to another Tutorial Tuesday.

Negative SEO has been in the news lately, with extortion attempts targeting several big-name SEO firms, specifically Dejan SEO and Web Gnomes.

Today I want to outline how you can easily monitor incoming backlinks, for free, with Google Webmaster Tools to protect your clients or your SEO agency against negative SEO attempts.

But first, a little context….

What is Negative SEO?

Negative SEO is the act of attempting to negatively affect a website’s search engine optimization. Instead of increasing your own site’s optimization for search, you work to decrease a competing site’s optimization in search.

The main goal of any negative SEO campaign is to inflict either a manual penalty or an algorithmic filter, specifically Penguin, upon the target site. (Update: Penguin's latest update changed the way the algorithm evaluates spam links - rather than punishing sites with these links, Penguin now devalues those links. This should help combat negative SEO from the algorithmic side.)

How is this possible? With links.

Google was founded on the concept that links from other websites are votes of confidence, and it used these links as signals of relevance and authority. SEOs were quick to catch on, and eventually a link arms race ensued, until spammy, low-quality, manipulative links became the norm.

Google couldn't allow such links to continue to manipulate their search results. It was bad for a variety of reasons, not least because it was too easy to get a bad result to the top of lucrative searches.

In April 2012, Google rolled out its link spam fighting algorithm, nicknamed Penguin. Penguin works to devalue and even punish low-quality, manipulative, and spammy links. There have since been five updates to Penguin, two of which were considered “large,” putting it at Penguin 2.1 by Google’s count.

Every iteration has been stricter on low quality links.

And with over 10 months since the last Penguin update, and no feasible way to recover outside of a data refresh—which only takes place during updates—anyone suffering from Penguin has had 10 months without any hope of reprieve. (Update: Penguin was updated 9/23/2016 - read more here.)

This means that negative SEO, which some are simply referring to as NSEO, has the potential to result in extremely harmful consequences.

Google has said before that they've built protections into their algorithms to thwart NSEO, but most SEOs are skeptical given our experience with Penguin and manual penalty recoveries.

How to Prevent Negative SEO

Negative SEO revolves around links, so the best way to prevent NSEO is by monitoring the backlinks to your website. Whenever questionable links flood in, use Google’s disavow tool to tell Google to ignore them.
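The disavow tool accepts a plain-text file listing the links you want Google to ignore: one entry per line, a `domain:` prefix to disavow every link from a domain, and `#` for comments. If you end up with a long list of suspect domains, a small script can assemble that file for you. This is a minimal Python sketch; the function name `build_disavow_file` and the example domains are hypothetical, and you should double-check the format against Google’s current disavow documentation before uploading anything.

```python
from typing import Iterable


def build_disavow_file(domains: Iterable[str], urls: Iterable[str] = (), note: str = "") -> str:
    """Return disavow-file text: optional '#' comment, then 'domain:' lines, then URLs.

    Hypothetical helper; the output format follows the disavow file
    conventions described above (one entry per line).
    """
    lines = []
    if note:
        # Lines starting with '#' are treated as comments by the disavow tool.
        lines.append(f"# {note}")
    # Whole-domain entries, trimmed, lowercased, and deduplicated.
    for domain in sorted({d.strip().lower() for d in domains if d.strip()}):
        lines.append(f"domain:{domain}")
    # Individual page URLs, trimmed and deduplicated but otherwise kept as given.
    for url in sorted({u.strip() for u in urls if u.strip()}):
        lines.append(url)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Example domains and URLs are made up for illustration.
    print(build_disavow_file(
        ["spam-example.net", "Spam-Example.net "],
        ["http://bad.example.org/page.html"],
        note="Suspected negative SEO batch",
    ))
```

Save the output as a UTF-8 `.txt` file before uploading it. Sorting and deduplicating the entries keeps the file easy to check by hand, which matters because disavowing a legitimate link by mistake can cost you its value.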

There are a few tools that aid in automatic backlink monitoring:

- Dejan SEO’s Fresh Link Finder

- Monitor Backlinks

- Linkody

- InSpyder’s Backlink Monitor 4

- Link Research Tools Link Alert

Backlink Explorers for manual monitoring:

For this tutorial we’ll be focusing on Google Webmaster Tools (now named Search Console), Google’s free suite of tools for webmasters, which should already be set up for every website you do SEO work on.

Step One: Navigate to Google Webmaster Tools (Search Console)

Head on over to Google Webmaster Tools and you’ll be directed to the home page with a list of sites you manage.

Simply click your website’s name or image to open the Google Webmaster Tools dashboard for that website.

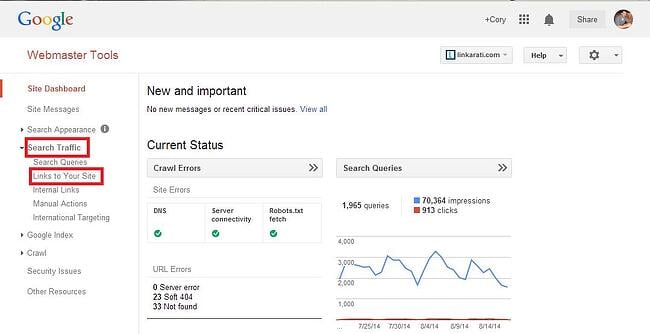

Step Two: Navigate to Search Traffic --> Links to Your Site

You’ll land on the dashboard overview for the website you selected.

Click on “Search Traffic” in the left-hand navigation. This expands a drop-down menu with “Links to Your Site” listed second. Click on this link.

You’ll be taken to a new page:

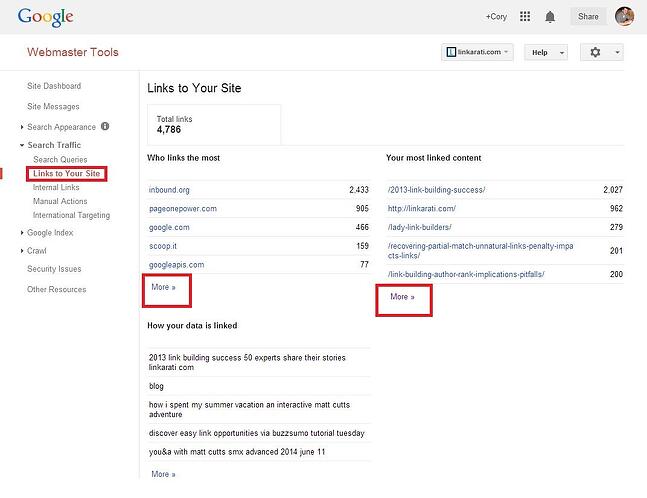

This page serves as an overview of your backlinks. To see more detailed information, click either of the “More >>” links beneath “Who links the most” and “Your most linked content.”

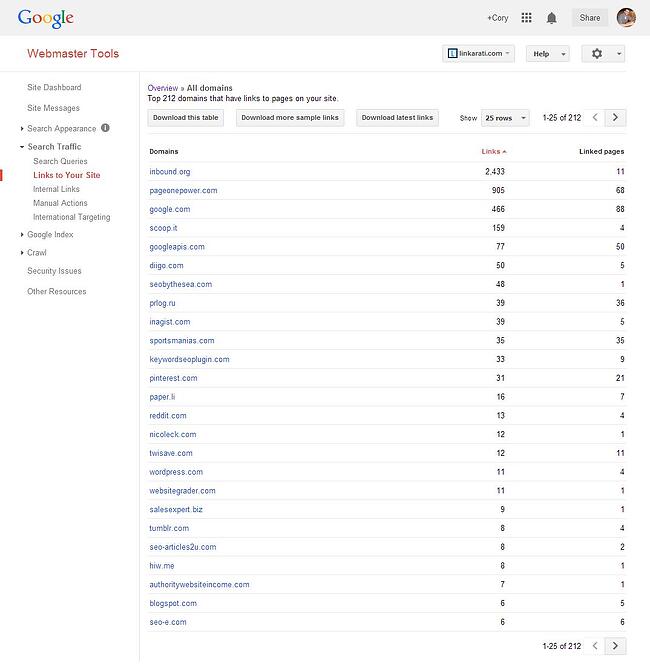

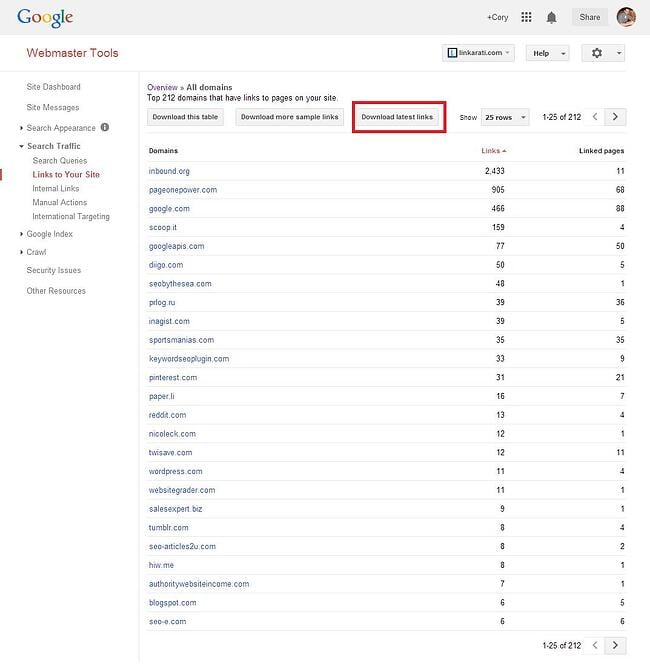

You’ll see this page:

Step Three: Download Latest Links

To get the most useful information from Google Webmaster Tools you’ll need to download the data.

For negative SEO purposes, you’ll want a list of your latest links:

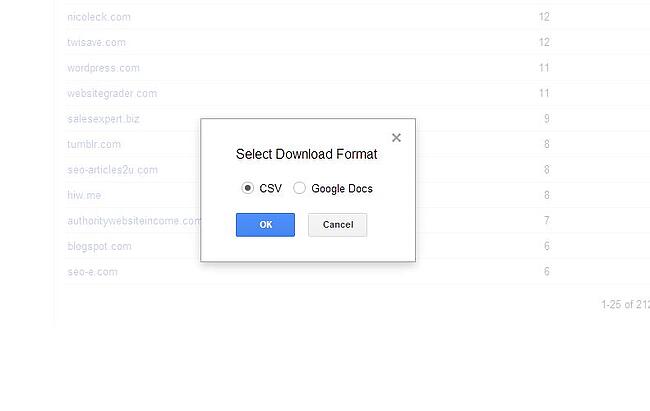

This will open a menu that lets you download the data either as a CSV file or to Google Docs.

Either works fine; choose based on whether you’d rather explore the data in Excel or Google Docs.


Let’s look at it first in Excel.

Step Four (A): Latest Links in Excel

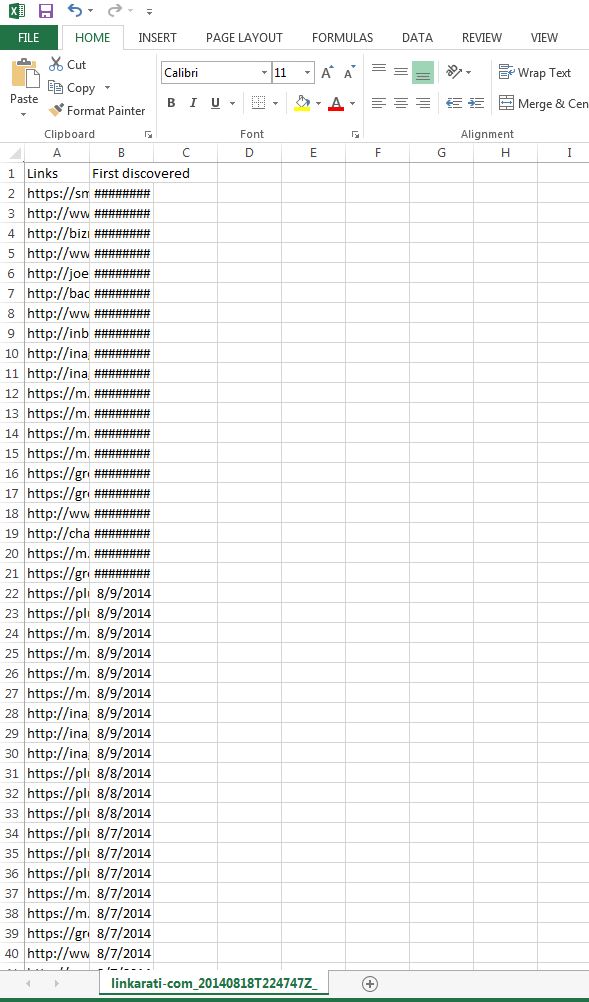

Once you choose to download your website’s latest links as a CSV, you’ll see a download bar pop up at the bottom of your browser.

When the download finishes, click the file and it’ll open in Excel (assuming you have Excel installed on your computer; if not, use the Google Docs option).

This will bring up the file in Excel, which will look roughly like this:

Don’t be alarmed by the ####### symbols; they just mean the column is too narrow to display the dates. Widen the column or click into a cell to reveal the data. The data will be automatically sorted from most recent links to oldest.

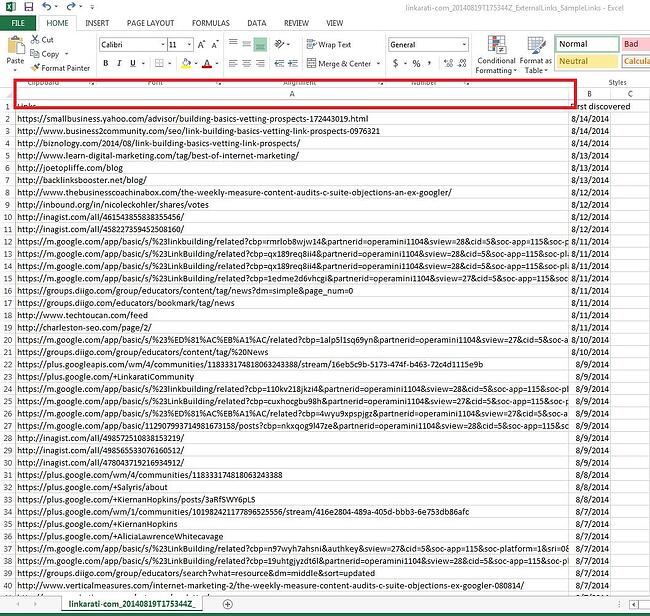

I recommend expanding column A so you can see the full URL path of each linking page:

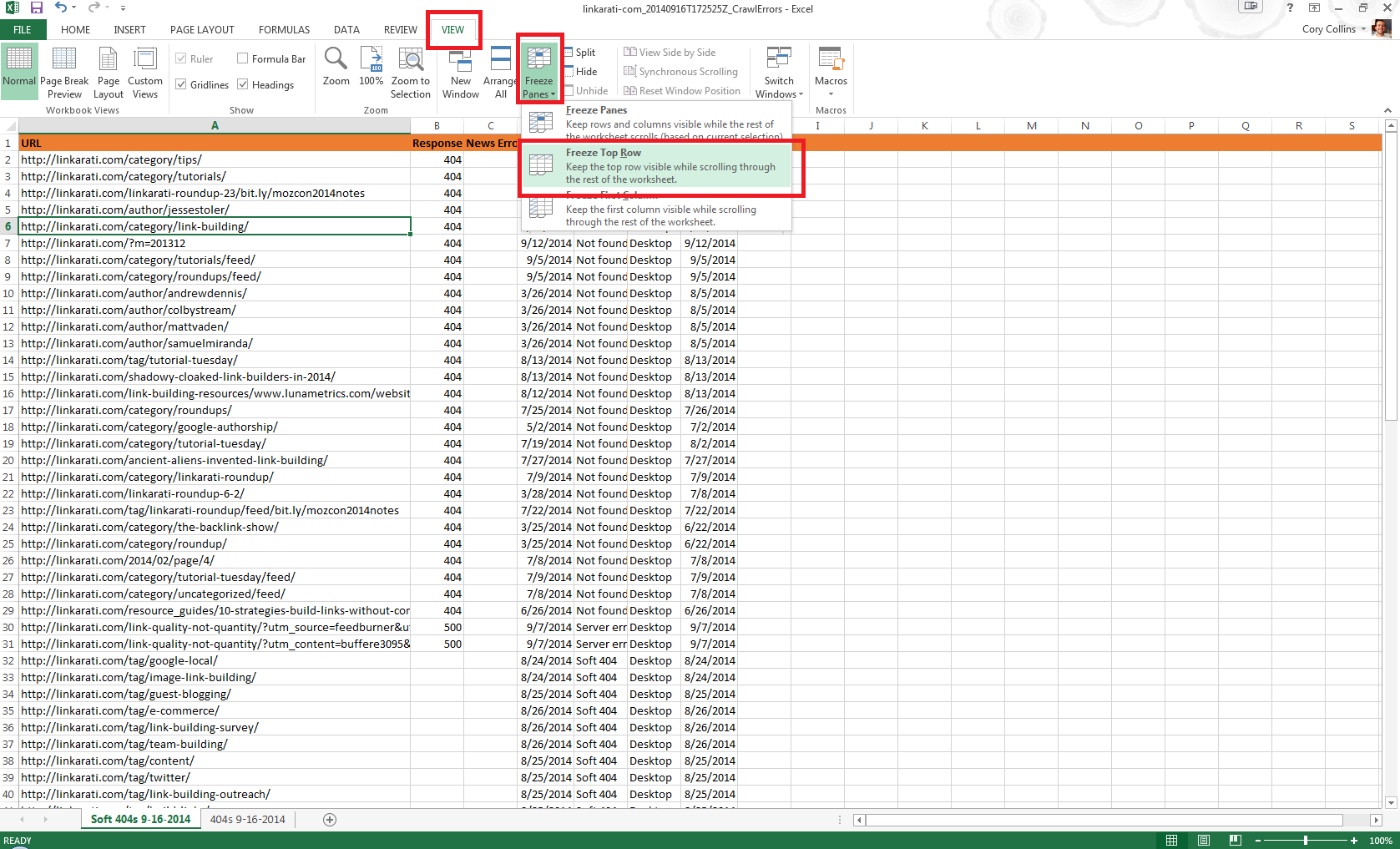

For ease of use, freeze row one and highlight it to make it stand out. To freeze it, head into the View tab, choose Freeze Panes, and select Freeze Top Row.

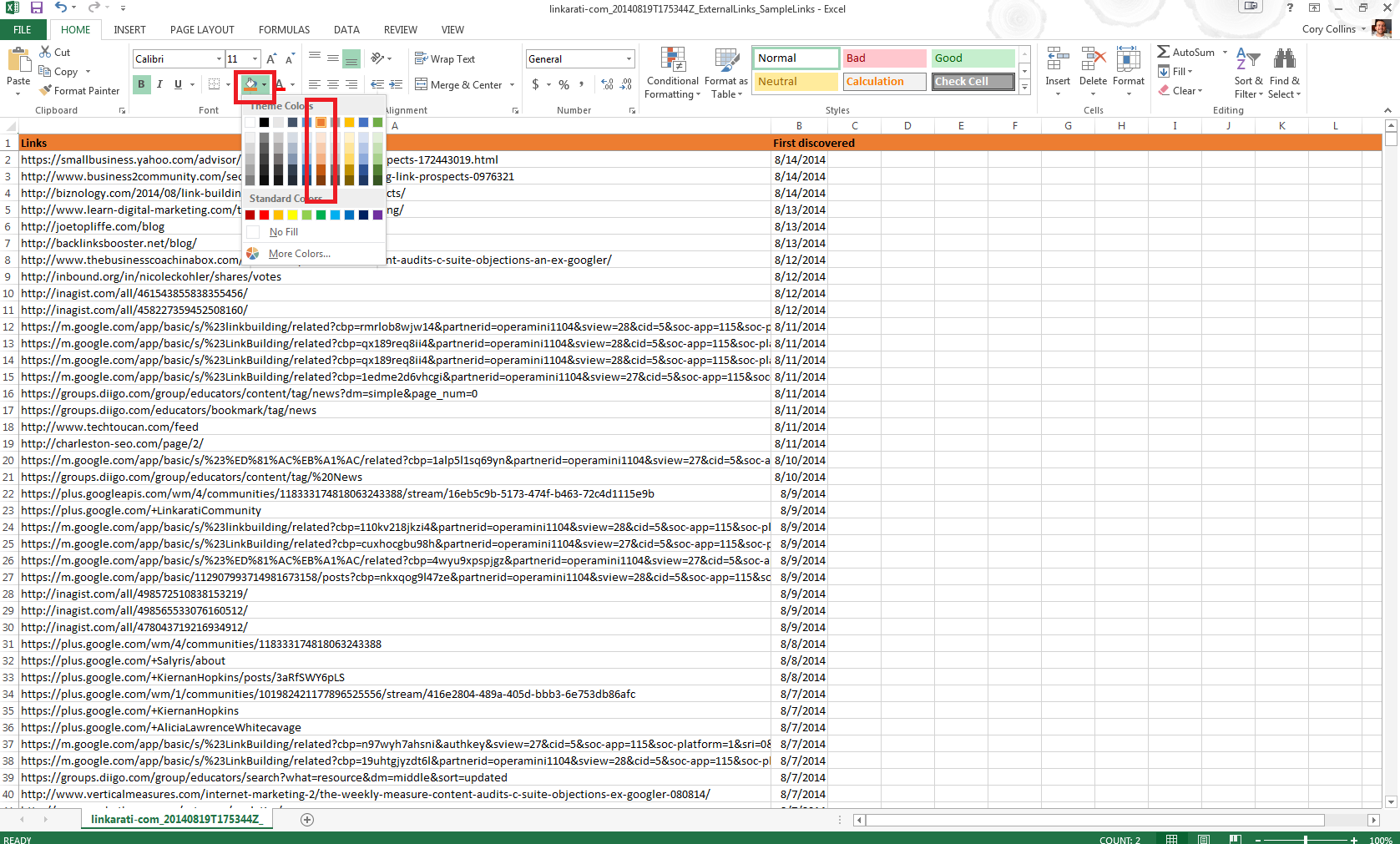

All that’s left is to make the top row stand out from the rest. I’d recommend giving it an orange background color.

Now it’s time to dive into the data.
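If you’d rather script your review than scroll through a spreadsheet, the CSV export can also be loaded with a few lines of Python. The sketch below assumes the file has the two columns described in Step Five, headed `Links` and `First discovered`; the exact header text and date format in your export may differ, so treat `DATE_FORMATS`, the column-name defaults, and `load_latest_links` as hypothetical and adjust them to match your file.

```python
import csv
import io
from datetime import date, datetime
from typing import List, TextIO, Tuple

# Assumed date formats for the "First discovered" column. "%m/%d/%y" is tried
# before "%m/%d/%Y" so that "3/15/14" is read as 2014 rather than year 14.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%y", "%m/%d/%Y", "%b %d, %Y")


def parse_date(value: str) -> date:
    """Parse a date using the first format in DATE_FORMATS that fits."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {value!r}")


def load_latest_links(
    f: TextIO, link_col: str = "Links", date_col: str = "First discovered"
) -> List[Tuple[str, date]]:
    """Read (url, first_discovered) pairs from the export, newest first."""
    rows = []
    for row in csv.DictReader(f):
        url = (row.get(link_col) or "").strip()
        seen = (row.get(date_col) or "").strip()
        if not url or not seen:
            continue  # skip blank or partial rows
        rows.append((url, parse_date(seen)))
    rows.sort(key=lambda r: r[1], reverse=True)
    return rows


if __name__ == "__main__":
    # Made-up sample rows in the assumed two-column layout.
    sample = (
        "Links,First discovered\n"
        "http://a.example.com/post,2014-03-01\n"
        "http://b.example.com/page,2014-03-15\n"
    )
    for url, seen in load_latest_links(io.StringIO(sample)):
        print(seen, url)
```

For a real export, open the file with `open("latest_links.csv", newline="", encoding="utf-8-sig")` (the file name is a placeholder) and pass the handle to `load_latest_links`. The `utf-8-sig` encoding strips a byte-order mark if the file has one, which would otherwise end up glued to the first header name.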

Step Four (B): Latest Links in Google Docs

Google Docs is great for collaboration or simply for accessing your data from multiple devices. It’s currently free to all Google users and lives in the cloud, so you can reach it from any device.

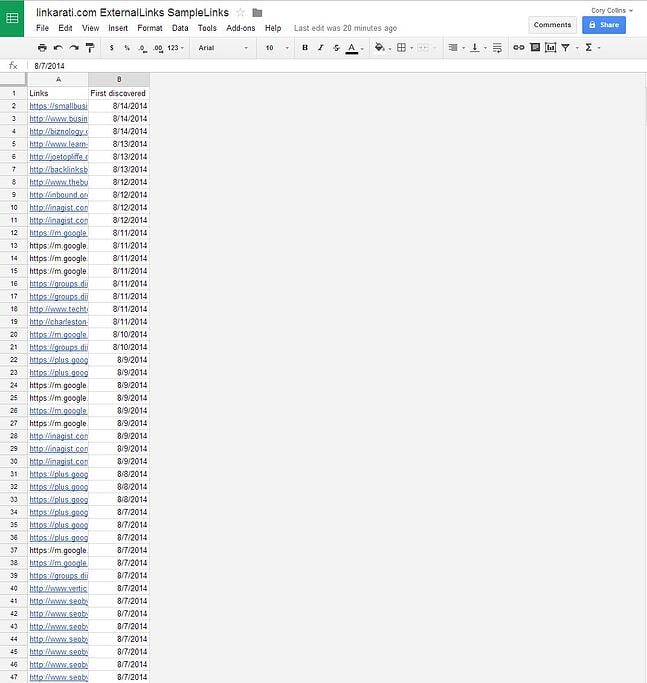

Here’s what your list of latest links will look like in Google Docs:

I recommend expanding column A, freezing the top row, and bolding it to make it stand out.

Simply navigate to “View,” “Freeze Rows,” and “Freeze 1 row.”

Now you’re ready to review the data.

Step Five: Reviewing the Data – First Discovered

You now have your recent links in front of you, organized in an easy-to-review format. All that’s left is the review itself.

Google Webmaster Tools provides us with two points of data: First discovered (the date) and Links (the full URL path of the linking page).

What you’ll want to look at first is First Discovered. The date the link was discovered is important for two reasons:

- Negative SEO attacks tend to build hundreds, if not thousands, of low-quality, spammy links at a time.

- It’s easier to review the date the link was made than to check every single link itself for quality.

This means the discovery date gives us a good threshold test. A large batch of links all discovered on the same date suggests that your link velocity (the rate at which new links point to your site) is unnaturally high, which could be the result of a negative SEO attack.

You should be able to skim through your list of recent links and quickly spot any unnatural patterns of link growth. If you notice any periods of high link growth, you’ll want to review those links.
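The same threshold test can be automated: count how many links were first discovered on each date and flag dates that stand far above your usual daily count. This is a minimal sketch, assuming you already have the First discovered dates as Python `date` objects; the function name and the `factor` and `min_links` thresholds are illustrative assumptions rather than values Google publishes, so tune them to your site’s normal link growth.

```python
from collections import Counter
from datetime import date
from statistics import median
from typing import Iterable, List, Tuple


def find_link_spikes(
    discovered: Iterable[date], factor: float = 5.0, min_links: int = 20
) -> List[Tuple[date, int]]:
    """Return (date, count) pairs for dates whose new-link count looks abnormal.

    A date is flagged when its count is at least `factor` times the median
    daily count AND at least `min_links`, so a quiet site isn't flagged for
    a handful of links. Dates with zero new links never appear in the data,
    so the median is taken over days that had at least one link.
    """
    per_day = Counter(discovered)
    if not per_day:
        return []
    typical = median(per_day.values())
    threshold = max(min_links, factor * typical)
    return sorted((day, n) for day, n in per_day.items() if n >= threshold)


if __name__ == "__main__":
    # Hypothetical data: one link a day for ten days, then 200 on a single day.
    dates = [date(2014, 3, d) for d in range(1, 11)] + [date(2014, 3, 12)] * 200
    print(find_link_spikes(dates))  # → [(datetime.date(2014, 3, 12), 200)]
```

A flagged date is only a lead. A product launch or press coverage can also produce a legitimate spike, so review the links themselves before disavowing anything.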

Step Six: Reviewing the Data – Link URL Path

Once you’ve found a batch of links you believe are suspicious, or even potentially the result of negative SEO, you’ll want to review the pages they’re located on.

This means clicking on the link under column A, the “Links.”

There are a few things to keep in mind here:

- False positives

- Link quality

- Relevance

- Link Type

- Authority

- Location

- Quality Assurance

False positives will be the quickest to rule out. Here you’re looking for pages that don’t actually contain your link, even though Google reports that they do.

This can happen for a variety of reasons:

- Sidebar widgets such as “recent comments”

- Updating newsfeeds

- New sitewide links

- Duplicate content issues.

A common cause of false positives is a new link that appears across multiple pages of a website for a limited time. Google crawls all of those pages and indexes the link on each one, attributing new links to you from hundreds or even thousands of pages.

Most of the time those links aren’t harmful. A perfect example is a natural comment link that’s featured in a sidebar “recent comments” widget. As Google crawls the site, it attributes a new link to every page that displays the widget. In reality, after a short period of time that link will live on one page and one page only.
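Checking for false positives by hand means opening each page and looking for your link. That check can be sketched in Python with only the standard library: parse the page’s HTML, resolve every `<a href>` against the page URL, and see whether any of them point at your domain. The function names and the `yoursite.example` domain are hypothetical. This only sees links present in the raw HTML, so links injected by JavaScript will be missed, and some sites may block scripted requests.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse
from urllib.request import urlopen


class LinkCollector(HTMLParser):
    """Collects absolute URLs from every <a href="..."> on a page."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links like "/about" against the page URL.
                self.hrefs.append(urljoin(self.base_url, value))


def page_links_to(html: str, page_url: str, your_domain: str) -> bool:
    """True if the HTML has an <a> link to your_domain or one of its subdomains."""
    collector = LinkCollector(page_url)
    collector.feed(html)
    your_domain = your_domain.lower()
    for href in collector.hrefs:
        host = (urlparse(href).hostname or "").lower()
        if host == your_domain or host.endswith("." + your_domain):
            return True
    return False


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Download a page's raw HTML (no JavaScript is executed)."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")
```

For a live check you would call something like `page_links_to(fetch_html(url), url, "yoursite.example")` for each URL in a suspicious batch; pages that return `False` are candidates for false positives rather than disavowal.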

When you should consider using the disavow tool:

- Scraped content/scraper sites

- Unnatural sitewide links

- Low relevance

- Thin content

- Bad link neighborhoods.
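Sitewide links and widget false positives share a footprint: many linking URLs from a single domain. Grouping your latest links by domain makes both easy to spot, and the domains that turn out to be genuinely spammy are the ones you would list as `domain:` entries in a disavow file. This is a minimal sketch, assuming full URLs with a scheme (`http://...`) as in the export; the `min_pages` cutoff and the function name are hypothetical.

```python
from collections import Counter
from typing import Iterable, List, Tuple
from urllib.parse import urlparse


def links_per_domain(urls: Iterable[str], min_pages: int = 50) -> List[Tuple[str, int]]:
    """Count linking URLs per domain and return domains with at least min_pages.

    Expects full URLs with a scheme; a leading "www." is dropped so www and
    non-www pages on the same site are counted together.
    """
    counts = Counter()
    for url in urls:
        host = (urlparse(url.strip()).hostname or "").lower()
        if host.startswith("www."):
            host = host[len("www."):]
        if host:
            counts[host] += 1
    # most_common() orders domains from most to fewest linking pages.
    return [(host, n) for host, n in counts.most_common() if n >= min_pages]


if __name__ == "__main__":
    # Hypothetical URLs: one domain linking from 60 pages, another from 2.
    urls = [f"http://www.spam.example/page-{i}" for i in range(60)]
    urls += ["http://news.example/story-a", "http://news.example/story-b"]
    print(links_per_domain(urls))  # → [('spam.example', 60)]
```

A domain with many linking pages is not automatically spam; the widget example above produces the same pattern from a perfectly natural link, so open a few of the pages before deciding.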

Trust your instincts. Look at the site itself to gauge whether or not the link is natural. If it’s negative SEO the links will look immediately bad, low quality, manipulative, or just plain unnatural.

Common links used in negative SEO are forum signature spam, blog comment spam, web 2.0 links (one-page WordPress, Blogger, or Tumblr subdomains), unnatural sitewide links, and the like.
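Web 2.0 spam often leaves a recognizable trail in the URL itself: a subdomain on a free blogging platform. A quick filter can pull those URLs out of a long list for a closer look. The platform list below is an illustrative, incomplete assumption, and a match is not proof of spam, since plenty of legitimate blogs live on these platforms; it only narrows down what to review by hand.

```python
from urllib.parse import urlparse

# Illustrative, incomplete list of free platforms often used for web 2.0 links.
WEB20_PLATFORMS = ("blogspot.com", "wordpress.com", "tumblr.com")


def is_web20_subdomain(url: str) -> bool:
    """True if the URL's host is a subdomain of one of WEB20_PLATFORMS."""
    host = (urlparse(url).hostname or "").lower()
    return any(host.endswith("." + platform) for platform in WEB20_PLATFORMS)


if __name__ == "__main__":
    # Hypothetical latest-links URLs.
    latest = [
        "http://cheap-widgets-4u.blogspot.com/2014/03/post.html",
        "http://www.localnewspaper.example/article",
        "http://best-widget-deals.tumblr.com/",
    ]
    for url in latest:
        if is_web20_subdomain(url):
            print("review:", url)
```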

Recap

Negative SEO has the potential for serious harm.

Whether the result is a manual penalty or a Penguin hit, it will take time, energy, and resources to recover your lost traffic.

Google has made assurances that they have processes in place. However, this is an evolving issue, and you can’t leave your site’s protection purely to Google. Manually reviewing your incoming links is extremely easy, and negative SEO should be simple to spot.

The point of negative SEO is to aim a completely unnatural number of spam links at the targeted domain. If you suspect someone is targeting your website, you should be able to catch the attempt through Google Webmaster Tools in a matter of minutes.

And don’t forget there are paid options as well.